Nielsen Norman Group on the user experience of chatbots

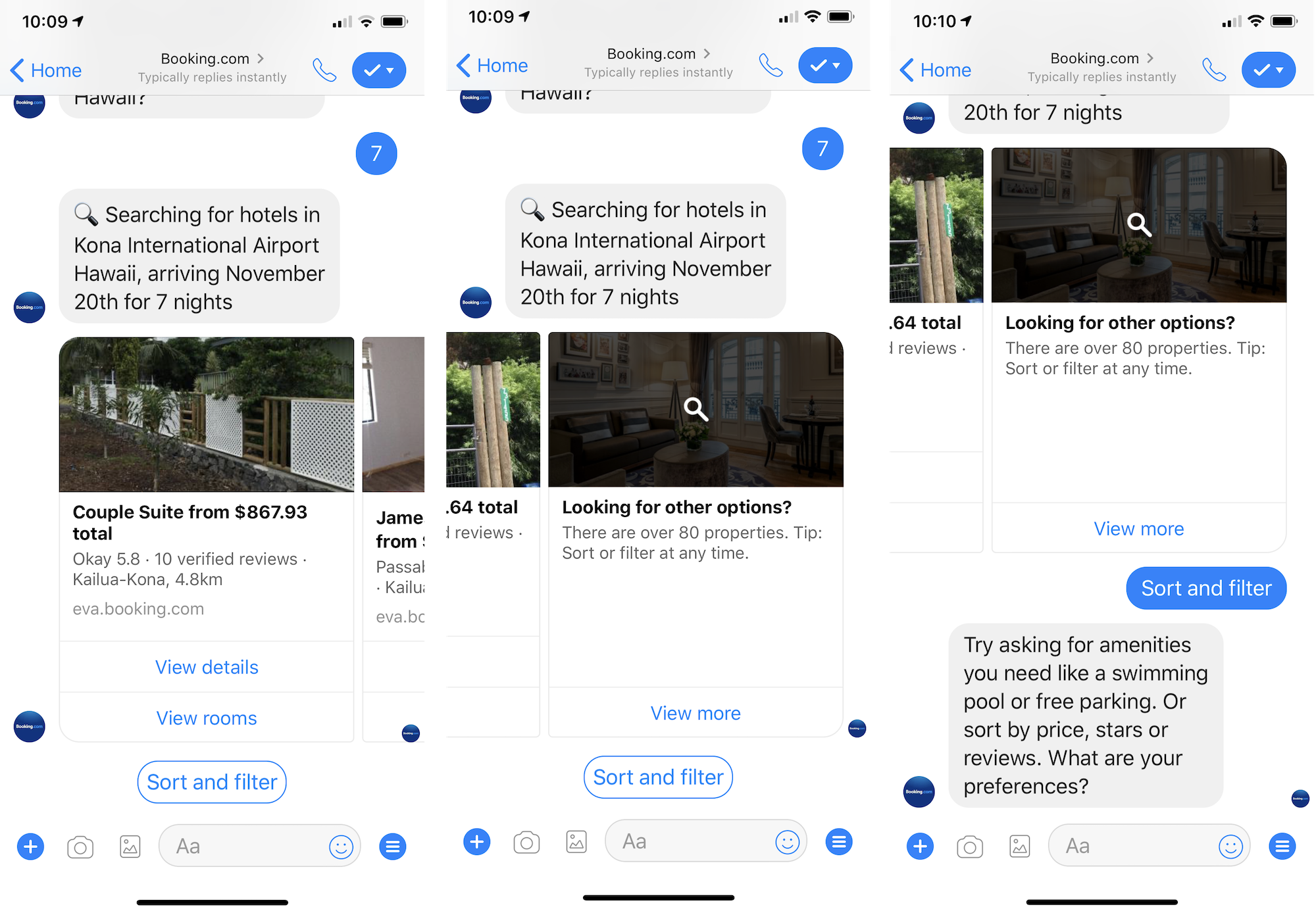

Far from being “intelligent”, today’s chatbots guide users through simple linear flows, and our user research shows that they have a hard time whenever users deviate from such flows, writes Raluca Budiu of the Nielsen Norman Group [NNGroup].

To understand the usability of chatbots, NNGroup recruited 8 US participants and asked them to perform a set of chat-related tasks on mobile (5 participants) and desktop (3 participants). Some of the tasks involved chatting for customer-service purposes with either humans or bots, and others targeted Facebook Messenger or SMS-based chatbots.

Interaction chatbots seemed to best resemble Alexa skills: they were designed to guide the user through a small number of tasks. Tasks supported by the bots are best conceptualized as linear flows with a limited number of branches that depend on the acceptable user answers. The bot asks a question, and the answer advances the bot along the matching branch of the flow. When the user complies with the flow and provides “legal” answers that match the system’s expectations, without skipping steps or using unknown words, the experience feels successful and smooth. However, as soon as users deviated from the prescribed script, problems occurred.
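The linear-flow model described above can be illustrated with a small sketch. This is not how any of the studied bots is actually implemented; the pizza-ordering flow, the `Step` class, and the `run_flow` function are all hypothetical names invented for illustration. The sketch only shows why a “legal” answer advances the conversation while an off-script answer leaves the bot stuck on the same question.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Step:
    prompt: str
    # Maps each accepted ("legal") answer to the name of the next step.
    branches: Dict[str, str] = field(default_factory=dict)


# Hypothetical flow: two questions, each with a small set of accepted answers.
FLOW = {
    "size": Step("What size pizza?", {"small": "topping", "large": "topping"}),
    "topping": Step("Which topping?", {"cheese": "done", "pepperoni": "done"}),
    "done": Step("Thanks, your order is placed."),
}


def run_flow(answers: List[str], start: str = "size") -> Tuple[str, List[str]]:
    """Feed user answers through the flow; return the final step and a transcript."""
    transcript = []
    current = start
    for answer in answers:
        step = FLOW[current]
        if not step.branches:  # terminal step: nothing left to ask
            break
        transcript.append(f"BOT: {step.prompt}")
        transcript.append(f"USER: {answer}")
        key = answer.strip().lower()
        if key in step.branches:
            # Legal answer: follow the matching branch.
            current = step.branches[key]
        else:
            # Off-script answer: the bot cannot advance and stays on this step.
            transcript.append("BOT: Sorry, I didn't understand that.")
    transcript.append(f"BOT: {FLOW[current].prompt}")
    return current, transcript


# A compliant user reaches the end of the flow.
final_step, _ = run_flow(["Large", "cheese"])

# A user who jumps ahead by answering two questions at once gets stuck.
stuck_step, stuck_log = run_flow(["large pepperoni"])
```

In this sketch, `"large pepperoni"` is a perfectly reasonable thing for a person to say, but it matches no branch of the first step, so the bot simply repeats its question. That is the kind of breakdown the study reports when users skip steps or use words the bot does not expect.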